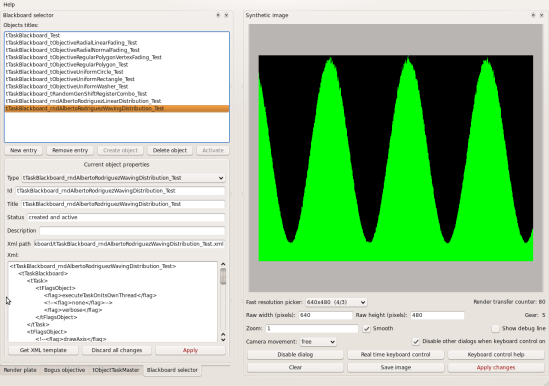

Custom probability distribution: fist of death update pack

2010-06-10 at 18:00 | Posted in Computer path | Leave a commentTags: 3D, art, C++, engine, fractal, maths, procedural, programming, pseudorandom, radiosity, random, ray tracing, raydiant, raytracing, realtime, render

You probably remember that post where a symbolic way to obtain your pseudorandom number generator with custom probability distribution from a homogenous pseudorandom number generator (= a normal one like for example the standard C function rand()) was shown. Just in case it is here. That’s all good but it uses integrals. So now and then, depending on the custom probability distribution you want, it is not practical/possible to symbolically integrate the formula. For example for discontinuous probability distributions or something waving (like the image at the bottom) it may not work. What to do in those cases?. If you want it bad you can have it with some numeric integration. Basically you supply the code with your desired probability density function then the code does its magic and you can now get your crazily distributed pseudorandom numbers. All along this code ‘t’ is used to designate pseudorandom numbers with the custom probability density and ‘r’ pseudorandom numbers with nominal constant probability density of 1. I’ve extracted just the interesting parts from my tProbabilityDistributionCrusher class so object bureaucracy is lost in favour of clarity. Here is the initialization code full of useful annotations:

/**

* this table is used to store values of:

* r = definite integral of E(x) between x=0 and x=t.

* (see 'Raydiant: custom probability distribution' post)

* The first element holds the value of the integral for t

* just greater than 0, the last element holds the

* value of the integral for t just less than 1.

* Any two consecutive elements have associated t values (not

* stored) separated exactly by 1.0/areaFrom0ToEachT.count.

*/

tArray *areaFrom0ToEachT;

/** areaFrom0ToEachT.count */

double realTableCount;

/** inverse of areaFrom0ToEachT.count */

double inverseTableCount;

/**

* stepPrecisionCount is the number of steps used for numeric

* integration, choose too few and that would give bad

* quality, choose to many and accumulative errors may grow

* high (but even for 10000000 they have been found to be

* negligible).

* Before compiling this function you must define your

* custom probability function customProbabilityDensityFunction()

* with anything you want

*/

void init(int integrationSteps)

{

// misc initialization

realTableCount = integrationSteps;

inverseTableCount = double(1.0/realTableCount);

// just use your favourite array class here:

areaFrom0ToEachT = new tArray(integrationSteps);

areaFrom0ToEachT->count = integrationSteps;

// fill numeric definite integration table

double area = 0;

for(int i=1;i<=integrationSteps;++i)

{

double t = double(i)*inverseTableCount;// (0,1]

assert(0.0<t && t<=1.0);

double height = customProbabilityDensityFunction(t);

assert(0<=height);

area += inverseTableCount*height;

(*areaFrom0ToEachT)[i-1] = area;

}

// lets normalize the area to 1.0

double invArea = double(1.0/area);

for(int i=0;i<integrationSteps;++i)

{

(*areaFrom0ToEachT)[i] *= invArea;

}

}

Here is an example definition of customProbabilityDensityFunction():

/**

* this defines a waving probability distribution, t is

* espected to be in the interval (0,1]

*/

double customProbabilityDensityFunction(const double& t)

{

return 0.1+cos(20.0*t)+1.0;

}

And now this is how to obtain the pseudorandom numbers:

/**

* returns a random number with the custom probability

* density function

*/

double get_t()

{

// get the nominal random number

assert(areaFrom0ToEachT && int(realTableCount)==areaFrom0ToEachT->count);

// rnd() must return a normal psudorandom number in the interval [0,1) :

double r = rnd();

// find where r is at our integration table, a plain binary search

// is performed here and lower bound ndx returned:

int ndx = areaFrom0ToEachT->getLowerBoundBinarySearch(r);

// deal with extreme values

if(ndx==areaFrom0ToEachT->count)

{

return 0.9999999999999;// just under 1.0

}

if(ndx==0)

{

return 0;

}

assert(0<ndx && ndxcount);

// lets calc two bounding t values for that place at our

// integration table

double t0 = double(ndx)*inverseTableCount;

assert(0.0<t0 && t0<1.0);

double t1 = double(ndx+1)*inverseTableCount;

assert(0.0<t1 && t1<=1.0);

// lets find the master interpolation coefficient between the

// two bounding t values

double complementaryFractional01 =

((*areaFrom0ToEachT)[ndx]-r)

/

((*areaFrom0ToEachT)[ndx]-(*areaFrom0ToEachT)[ndx-1]);

assert(0.0<=complementaryFractional01 && complementaryFractional01<1.0);

double interpolatedT =

double(t0*complementaryFractional01+t1*(1.0-complementaryFractional01));

assert(0.0<interpolatedT && interpolatedT<1.0);

return interpolatedT;

}

With this code you could easily obtain probability distributions like this one:

Good luck and thanks for listening!

Intimacity, rare tracks

2010-06-08 at 17:11 | Posted in Computer path | Leave a commentTags: 3D, art, C++, engine, fractal, global illumination, maths, path tracing, procedural, programming, radiosity, ray tracing, raydiant, raytracing, realtime, render

Over a hundred renders of the Intimacity landscape have been performed till now. Two of them have made it to the final stage and won the price (the price meaning being rendered at HQ and published at deviant art here and here) and at least one more is following soon (waiting for a Zalman cooling device for my main PC and another i7 is coming so I can keep programming while rendering with it). But there are a few of the unselected renders that are still interesting. Thankyou all for the support!

Closer fold-the-bar-three-leaf-clover

2010-06-01 at 17:56 | Posted in Computer path | Leave a commentTags: 3D, art, C++, engine, fractal, maths, procedural, programming, radiosity, ray tracing, raydiant, raytracing, realtime, render

Here, some close up views of this HQ render. These zooms have still pretty good resolution. The Raydiant engine uses no short cuts with global illumination, all effort is put to optimize the real worse case scenario. Make sure to view the images full size.

Blog at WordPress.com.

Entries and comments feeds.